About me

Testing AI and a history of making actual robots smarter.

With the proliferation of new AI applications and the graduation of these systems out of research labs, one is left wondering: how well will these AI modules actually perform in the real world? I co-founded Efemarai to answer this question and to ensure that AI systems are tested as rigorously as the products of any other established industry.

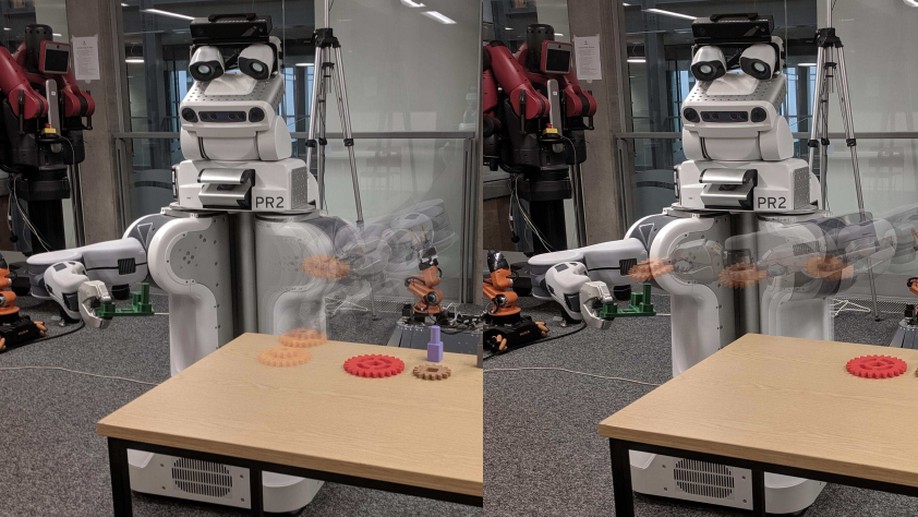

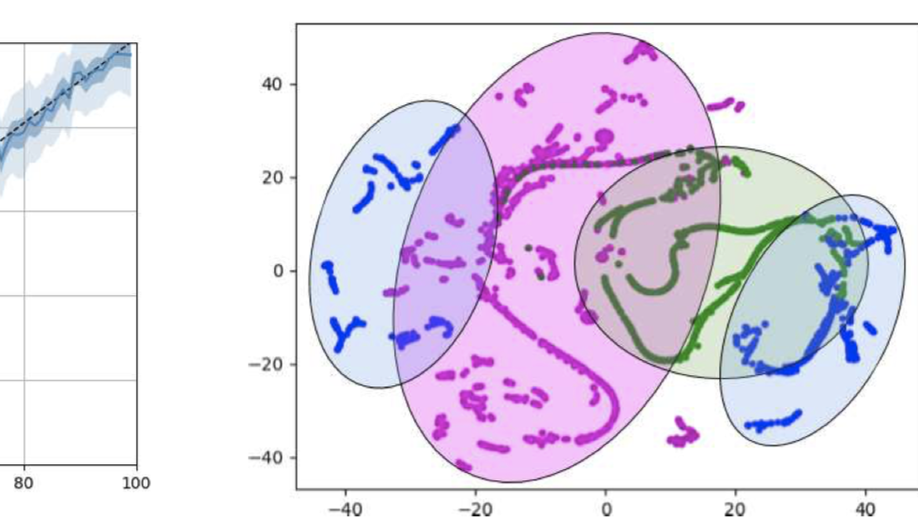

Previously, I researched how just a few expert demonstrations, alongside a causal view of the world, can be used to learn difficult, yet robust and safe, long-horizon behaviours for agents. I used hierarchical learning as a tool for the robot to understand the underlying problem structure, pushing towards interpretable, explainable, cause-effect based machine learning for robotics.

I was a PhD student in the Robust Autonomy and Decisions group, part of the Institute for Perception, Action and Behaviour at the University of Edinburgh. I was supervised by Prof. Subramanian Ramamoorthy and Dr. Kartic Subr.

Building AI, robots and liquid rocket engines.

Interests

- Artificial Intelligence

- Causality

- Robotics

- Computer Vision

Education

- PhD in Robotics and Autonomous Systems, 2020

  University of Edinburgh

- MEng in Robotics, 2015

  University of Reading